Steady as a "Veteran Driver": Huawei QianKun Intelligent Driving New Version Revealed

28 partner models, spanning a full product range from 150,000-yuan family cars to million-yuan luxury vehicles.

Huawei's QianKun intelligent driving system has been installed in over 1 million vehicles.

Huawei's cumulative lidar shipments have exceeded 1 million units.

The P2P parking-to-parking navigation assistance feature has been used a total of 10 million times.

The cumulative assisted driving mileage has reached 4 billion kilometers.

In August 2025, Huawei QianKun Intelligent Driving reached these four milestones. Notably, it has been just over four years since ADS 1.0 launched, and the P2P feature has been commercially available for only eight months. In that short time, it has already been used more than ten million times.

At this critical juncture, in early September, Gaishi Auto took an early test drive in an AITO M9 equipped with Huawei QianKun Intelligent Driving ADS 4.0, ahead of the system's official rollout. Starting from the Xingguo Hotel in Shanghai, we passed through the bustling streets around the Wukang Building and the heavy traffic of the Beijing-Shanghai Expressway before arriving at the test site on Tongji University's Jiading Campus, completing a complex-road challenge that covered the entire "parking space to parking space" chain.

Of course, the test did not stop there. To cover more complex and extreme scenarios, the organizers' test route deliberately included road conditions such as "unmarked roads" and "interchange roundabouts." In addition, extreme tests such as "AEB testing," "rain and fog AEB testing," "driver incapacitation assistance testing," and "parking with suspended obstacles testing" were set up at the Tongji University test track.

And its performance was indeed impressive.

What kind of intelligent driving do we really need?

If we pose this question to any ordinary driver who navigates congested streets daily, frequently stops and starts, deals with unexpected situations, and occasionally drives long distances, the answer would likely be highly consistent: it should be like an experienced, calm, and reliable "veteran driver"—not only able to accurately follow instructions, but also capable of understanding complex environments and making efficient, safe, and reassuring decisions.

However, this is easier said than done. Judging by how various companies promote the scenarios their assisted driving supports, urban driving is clearly far more challenging than highway driving, and it has long been regarded as the ceiling for L2-level assisted driving.

The reason is easy to understand. Compared to highways, which have clear rules and a closed environment, urban traffic undoubtedly serves as the most comprehensive stress test for intelligent driving systems.

City streets are filled with uncertainty, complexity, and sudden change. Pedestrians dart out from blind spots, non-motorized vehicles weave through traffic, unexpected congestion builds at intersections, road topology changes temporarily because of construction, and traffic signs and overhead signs come in many forms. The system even has to judge whether the car in front has stopped briefly or is about to open its door to let passengers out. All of this requires instantaneous perception, decision-making, and execution.

It requires not only precise control of the vehicle, but also a deep understanding and anticipation of the intentions within the surrounding environment.

Developing a city navigation assistance system that feels smooth in such complex urban environments requires not only vast amounts of data to learn countless typical scenarios, but also an almost human-like capacity for systematic thinking and generalization.

Specifically, an excellent intelligent driving system needs two core capabilities. The first is the verification, optimization, activation, and continuous operation of specific roads, that is, knowing the "temperament" of every street. The second is a higher-level ability to generalize, which does not rely on rote memorization but can infer and adapt like a human to handle scenarios it has never seen.

The former is already extremely difficult, because urban roads change rapidly: a road that is clear in the morning may look completely different in the afternoon because of temporary construction. This is the fundamental reason early solutions that relied too heavily on high-precision maps ran into bottlenecks.

Highways, which may seem simple and straightforward, also conceal numerous challenges: handling unexpected situations during high-speed cruising, recognizing cones and keeping the lane in construction zones, passing efficiently through toll stations, coping with adverse weather such as heavy rain and strong winds, and addressing driver fatigue on long trips. An advanced driver-assistance system must not only remain stable and controllable on the highway, but also find the best balance between efficiency and safety while coping with a variety of edge cases.

Currently, the industry has gradually converged at the basic algorithm level, with major manufacturers adopting a BEV+Transformer perception model architecture, complemented by a series of sensors such as LiDAR, millimeter-wave radar, and cameras, to achieve stable detection of general obstacles. In terms of path planning and vehicle control, different companies may have slight differences in their strategies, but the goal is the same: to safely and smoothly transport users from the starting point to the destination.

Navigating freely in the bustling city

Huawei's QianKun Intelligent Driving ADS 4.0 appears to be systematically conquering this ultimate "parking space to parking space" challenge. As early as the previous generation, ADS 3.0, Huawei was the first to propose the complete "parking space to parking space" concept: a vehicle should be able to travel from any starting parking space to any target parking space without the user presetting a route or the system having to learn the route by rote, so that it can park wherever there is a space and grows smarter with use. The latest 4.0 version builds on this foundation and deepens the experience across the board.

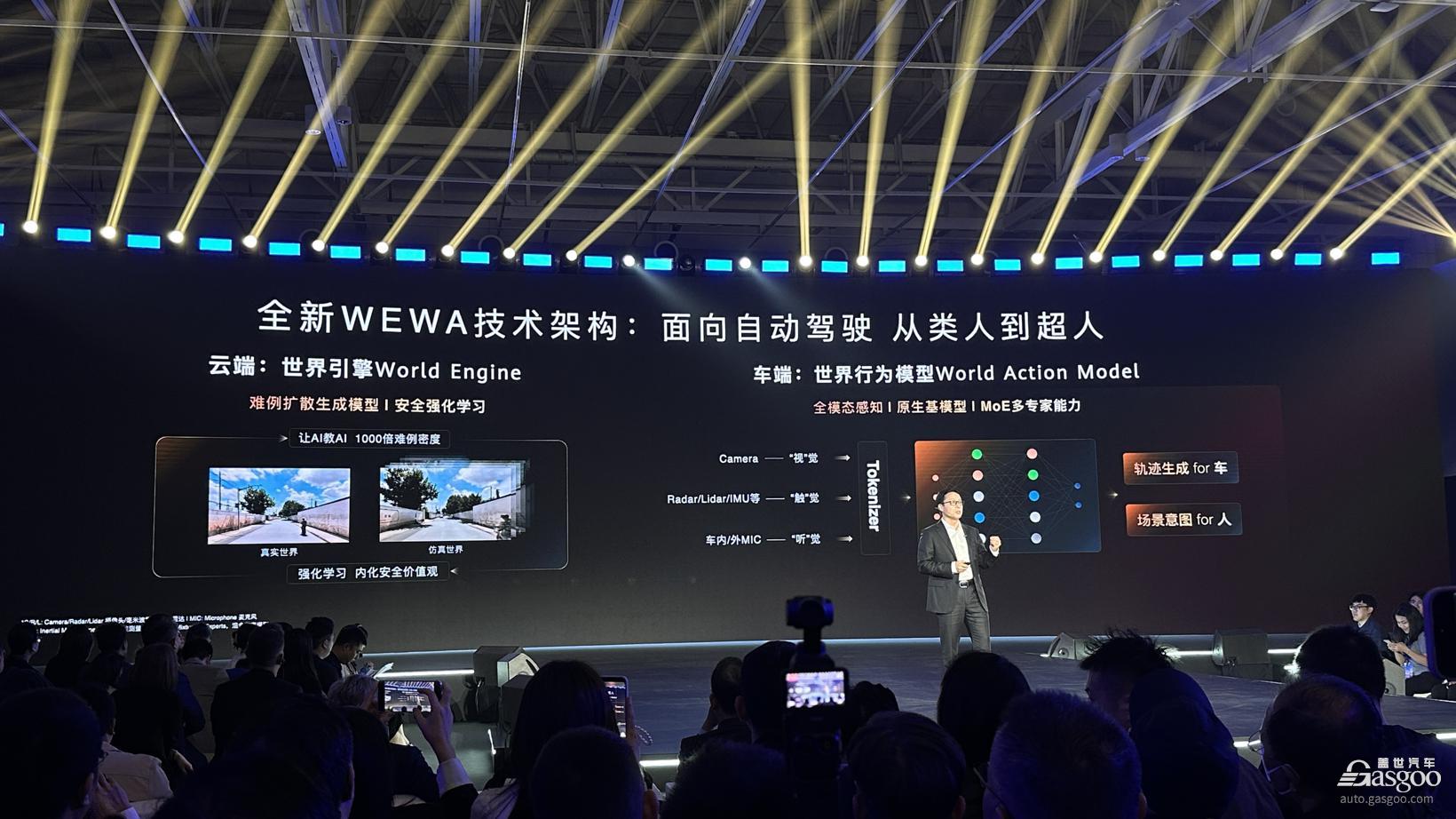

On a Saturday afternoon in the middle of the summer vacation, I tried the system around the iconic, bustling Wukang Building in Shanghai. The streets here are crowded, and the old town's roads are narrow and full of traffic participants, making the area an excellent proving ground for the "quality" of an intelligent driving system. Huawei QianKun Intelligent Driving 4.0 is built on the new WEWA architecture, which pairs cloud training with on-board decision-making, reducing end-to-end latency by 50%, cutting the hard-braking rate by 30%, significantly lowering the number of unnecessary lane changes, and improving traffic efficiency by 20%. Put plainly, its driving logic has become almost indistinguishable from the way we drive every day.

Here are a few examples.

In congested road sections, when the vehicle ahead comes to a stop, the system can determine whether it is a temporary pause in traffic flow or a stop for picking up or dropping off passengers. This allows the system to decide whether to wait patiently or to seize the opportunity for a steady lane change, thus avoiding unnecessary waiting anxiety.

When facing Shanghai’s common multi-branch intersections, large roundabouts, and unprotected left turns—extremely complex scenarios—the system demonstrates strong perception and decision-making capabilities. It can accurately recognize complex road topologies and traffic light information, selecting the correct route for passage.

When merging from an elevated ramp into a congested main road, it can accurately grasp the gaps in the traffic flow, smoothly completing the merging action. The entire process is decisive and natural, without any hesitation or stiffness.

When making an unprotected left turn, it does not wait endlessly for an absolutely safe "vacuum period," but instead, like a human driver, actively looks for reasonable gaps and proceeds confidently while ensuring safety.

In addition, it provides a last line of protection when pedestrians or riders cross suddenly. When an electric bicycle dashed out from behind a line of parked cars by the roadside, the system identified the situation almost instantly and braked decisively to a stop.

Admittedly, a brief test drive cannot cover every extreme scenario that may arise in urban commuting. Even so, what Huawei QianKun Intelligent Driving ADS 4.0 demonstrated goes far beyond basic lane keeping or adaptive cruise control and approaches a real understanding of the urban traffic environment. It no longer merely executes simple driving instructions but begins to interpret the traffic environment like a human driver, predict the behavior of other road users, and make safe and efficient decisions.

The ultimate defense line in extreme scenarios

In contrast to its impressive performance on urban roads, ADS 4.0 had few opportunities to show its capabilities on the highway: the stretch from the Jiangqiao Toll Station, where the G2 Beijing-Shanghai Expressway begins, to the Cao'an Highway Toll Station exit on the G15 Jiajin Expressway takes only a few minutes.

Perhaps because of the heavy traffic that day, or the many complaints that lane changes in version 3.0 were too frequent and too aggressive, or special settings the engineers had applied to this AITO M9, the highway experience was far more "boring" than expected. I did not get to try cone recognition in a road repair section, nor did I run into storms or other severe weather. The entire journey was a smooth cruise at 80-90 kilometers per hour, without overtaking a single vehicle.

Only two situations could be considered sudden. The first came when a slow vehicle merged from the right while there was still a safe distance to the vehicle ahead. ADS 4.0 did not accelerate to close the gap but chose to yield. Only when it detected the other vehicle continuing to drift slowly over the lane line did it decelerate again and, after checking that the gap in the left lane was safe, change lanes to avoid it.

The second came when leaving through the highway toll station, where the vehicle automatically selected the empty leftmost ETC lane and passed through without manual intervention. To reach the ramp on the right afterward, however, it completed three consecutive lane changes within 10 seconds. This maneuver is consistent with ADS 4.0's stated "20% improvement in traffic efficiency," but it raises questions about where the boundaries of planning and control should lie: the system prioritizes minimizing time cost, while the risk weight it assigns to consecutive lane changes still needs tuning.

It is precisely to answer these ultimate safety questions, which arise from daily life yet go beyond it, that the extreme tests on the closed track that followed were so important and so striking. The true value of a cutting-edge advanced driver assistance system lies not only in easing the fatigue of everyday driving but, more importantly, in serving as the last line of defense that protects lives in rare moments of extreme danger.

In the "Rain and Fog AEB Test," artificially simulated heavy rain reduced visibility to less than 50 meters, with everything appearing as a white blur. In conditions like these, where the car must rely almost entirely on perception beyond human senses, the 192-line LiDAR mounted on the roof of the AITO M9 played a decisive role. It penetrated the rain and fog and accurately detected a stationary white vehicle that suddenly appeared ahead. At a moment when a human driver might not yet have noticed the danger, the system had already triggered emergency braking, significantly extending the response distance and avoiding a collision.
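A rough way to see why earlier detection matters is the standard stopping-distance relation: distance covered during the reaction time plus braking distance, d = v·t + v²/(2a). The sketch below is only illustrative. The speeds, the reaction times (1.5 s for a human, 0.3 s for an automated system), and the 7 m/s² deceleration are assumptions for the sake of the example, not figures from Huawei or from the test; only the 50-meter visibility comes from the text above.

```python
def stopping_distance_m(speed_kmh: float, reaction_s: float, decel_ms2: float) -> float:
    """Reaction distance plus braking distance, in metres."""
    v = speed_kmh / 3.6  # convert km/h to m/s
    return v * reaction_s + v * v / (2.0 * decel_ms2)


if __name__ == "__main__":
    VISIBILITY_M = 50.0  # visibility quoted for the rain-and-fog test
    for speed in (60, 80, 100):  # assumed speeds, km/h
        # Assumed reaction times and deceleration, for illustration only.
        human = stopping_distance_m(speed, reaction_s=1.5, decel_ms2=7.0)
        system = stopping_distance_m(speed, reaction_s=0.3, decel_ms2=7.0)
        print(
            f"{speed} km/h: human ~{human:.1f} m, automated ~{system:.1f} m "
            f"(visibility {VISIBILITY_M:.0f} m)"
        )
```

Under these assumed numbers, a driver at 80 km/h who only reacts once the obstacle becomes visible at 50 meters needs more road than is available, while a system that reacts faster, or detects the obstacle before it is visible at all, stops with margin to spare.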

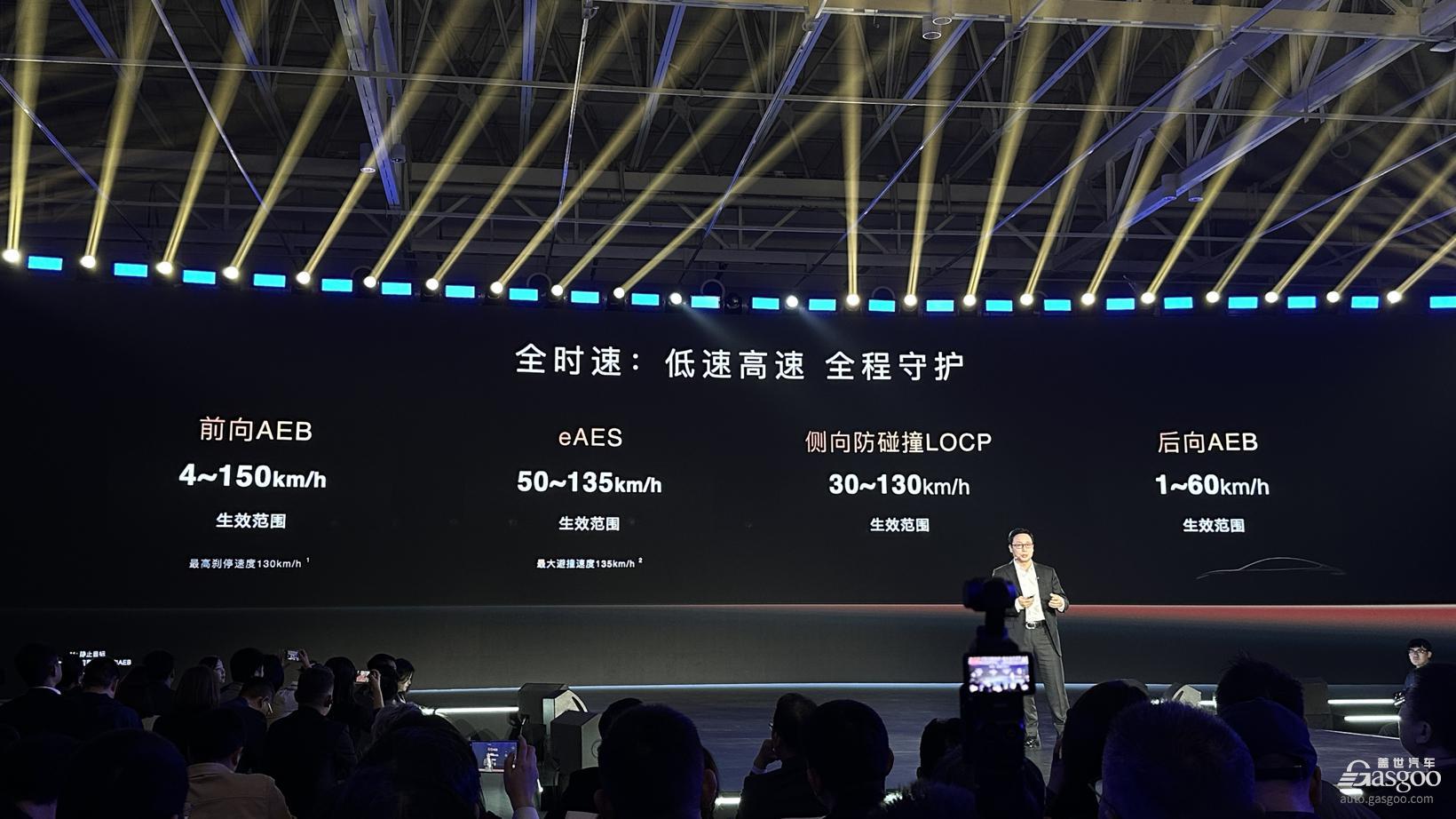

Supporting all of this is Huawei’s pioneering “Five-Dimensional Safety” concept. This system enables active safety intervention across the full speed range of 0–130 km/h, achieving 360° perception coverage with no blind spots. From extreme weather conditions such as heavy rain, fog, and sandstorms to complex road scenarios like rural paths without lane markings, it handles them all with ease. To date, this system has prevented collisions over 2.54 million times in real-world situations, with challenging nighttime scenarios accounting for as much as 37% of these cases. These are no longer cold laboratory statistics, but countless family stories that could have turned out differently.

In the even more extreme "driver incapacitation" scenario, ADS 4.0's response shows both technical strength and real care for the people in the car. Whether the car is in Navigation Cruise Assist (NCA), Lane Cruise Control (LCC), Adaptive Cruise Control (ACC), or even manual driving mode, once the in-cabin laser-vision fusion sensors detect that the driver's hands have left the steering wheel and the system judges the driver to be incapacitated (for example, by a sudden medical condition), it immediately takes over control of the vehicle. It decelerates smoothly, turns on the hazard warning lights and, after confirming that the surroundings are safe, automatically pulls over and brings the vehicle to a safe stop. It then calls the emergency center through the onboard system and reports the vehicle's location and situation, all without human intervention. This is not just a life-saving system but a responsible intelligent guardian.
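The response sequence described above can be pictured as a small state machine. The following is a minimal sketch of that idea only: the state names, the `next_step` function, and its three inputs are hypothetical and chosen for illustration, and nothing here reflects how Huawei actually implements the feature.

```python
from enum import Enum, auto


class Step(Enum):
    MONITORING = auto()      # normal driving, in any mode
    DECELERATING = auto()    # system has taken over; hazard lights on
    PULLING_OVER = auto()    # moving toward the roadside
    STOPPED = auto()         # vehicle stationary
    EMERGENCY_CALL = auto()  # location and situation reported


def next_step(step: Step, incapacitated: bool, roadside_clear: bool, speed_kmh: float) -> Step:
    """Advance the (hypothetical) incapacitation response by one step."""
    if step is Step.MONITORING:
        return Step.DECELERATING if incapacitated else Step.MONITORING
    if step is Step.DECELERATING:
        # Keep slowing in lane until the surroundings are judged safe.
        return Step.PULLING_OVER if roadside_clear else Step.DECELERATING
    if step is Step.PULLING_OVER:
        return Step.STOPPED if speed_kmh <= 0.0 else Step.PULLING_OVER
    # STOPPED leads to the emergency call, which is the final state.
    return Step.EMERGENCY_CALL


if __name__ == "__main__":
    step = Step.MONITORING
    # Made-up observations: (incapacitated, roadside_clear, speed_kmh)
    observations = [
        (False, False, 90.0),
        (True, False, 90.0),
        (True, False, 60.0),
        (True, True, 40.0),
        (True, True, 0.0),
        (True, True, 0.0),
    ]
    for obs in observations:
        step = next_step(step, *obs)
        print(step.name)
```

The point of the sketch is the ordering: the pull-over only begins once the surroundings are judged safe, and the emergency call only follows a complete stop.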

Although this brief public road experience did not allow for a full exploration of all the capabilities of ADS 4.0—especially its so-called P2P 2.0 intelligent parking function, which is said to remember parking spots across different floors and intelligently recommend nearby parking lots—the precise demonstrations of extreme scenarios within the test track were already sufficient to clearly outline its core value.

It is not only the driving that feels "human"

On the same day, the organizers also provided several models upgraded to the HarmonySpace 5 Harmony Cockpit, including the million-yuan-class Zunjie S800, so that everyone could try the cockpit in a static setting.

From the rear seat of the Zunjie S800, you can see its innovative soft partition with a large screen in the middle, fitted with a central rotor baffle. When the 40-inch automotive-grade screen is lowered, it separates the rear from the front row as fully as possible and, together with the dual zero-gravity seats, creates a more luxurious and private rear cabin.

Interestingly, Huawei has integrated the HUAWEI SOUND Extraordinary Series into the HarmonyOS cockpit for the first time, with a maximum configuration of 43 speakers, delivering a 7.5.10 ultra-surround sound field. What is truly astonishing is its adaptive sound field control algorithm. By emitting "negative sound waves" that are phase-inverted to the noise, the system achieves physical-level noise cancellation. It supports front and rear sound field isolation—passengers in the front can listen to symphonies while those in the rear watch action movies, with a sound energy isolation rate of up to 99%, ensuring no mutual interference.
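The principle behind "negative sound waves" is destructive interference: adding a phase-inverted copy of a sound to the original cancels it. The sketch below shows only that idealized principle with a synthetic tone; the 100 Hz frequency and 8 kHz sample rate are arbitrary assumptions, and this is not Huawei's adaptive algorithm. It also converts the quoted 99% figure to decibels, assuming that the figure refers to acoustic energy.

```python
import math


def sine_wave(freq_hz: float, sample_rate: int, n_samples: int, phase: float = 0.0) -> list[float]:
    """Sampled unit-amplitude sine wave."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate + phase) for i in range(n_samples)]


def rms(samples: list[float]) -> float:
    """Root-mean-square level of a signal."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def energy_change_db(remaining_fraction: float) -> float:
    """Express a remaining energy fraction in decibels."""
    return 10.0 * math.log10(remaining_fraction)


if __name__ == "__main__":
    noise = sine_wave(100.0, 8000, 800)   # assumed 100 Hz hum, 0.1 s long
    anti_noise = [-s for s in noise]      # phase-inverted copy
    residual = [n + a for n, a in zip(noise, anti_noise)]
    print(f"noise RMS:    {rms(noise):.3f}")
    print(f"residual RMS: {rms(residual):.3f}")
    # If 99% of the energy is isolated, 1% remains.
    print(f"1% energy remaining = {energy_change_db(0.01):.0f} dB")
```

In a real cabin the cancellation is never this clean, because the anti-noise has to be estimated and played back with the right timing at each listening position. On the energy reading assumed here, 99% isolation corresponds to about a 20 dB reduction.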

On the visual side, the "Shanhai Image Quality" engine lifts the perceived quality of ordinary 720p video to a 1440p level. Color transitions are smoother and dark details are clearer, and with the million-pixel intelligent headlights projecting a 100-inch color display outside the vehicle, the car instantly becomes both a mobile private cinema and an open-air theater.

As Huawei's latest-generation intelligent cockpit system, HarmonySpace 5 is clearly more than a showcase of integrated functions. It is an attempt to become a travel companion that truly "understands" its passengers: from voice to sound, from screen to gestures, it redefines "human-vehicle integration" through nearly human-like interaction.

HarmonySpace 5 adopts a new Mixture of Large Model Agent (MoLA) architecture, integrating the capabilities of general large models with AI capabilities in domains such as music and film, thereby building a rich hardware and software ecosystem and offering diverse scenario experiences across multiple spaces.

On the Zunjie S800, HarmonySpace 5 not only expands its "intuitive" voice interaction capabilities but also applies gesture recognition to more scenarios. The laser sensor at the center of the cabin ceiling can accurately identify gestures from rear-seat passengers: sliding your arm inward quietly closes the car door, and waving forward or backward changes the tint of the privacy glass. If you point at the reading lamp and call out "Xiao Yi," the reading light turns on instantly. This almost magical control method is remarkably responsive and works so smoothly that the system seems to have anticipated your intentions.

Admittedly, extreme sensitivity also brings real-world problems. In practice, an occasional forward lean or a large movement may be misread as a command, causing the glass to change tint unexpectedly. Gesture interaction still has room to improve in adapting to different scenarios, but the future it points to is clear enough.

The true breakthrough of the HarmonySpace 5 cockpit lies not in the perfection of any single technology, but in its integration of AI large models, acoustic engineering, visual computing, and interactive sensing into a warm and living "travel companion." It understands speech, distinguishes sounds, comprehends gestures, and can even perceive intentions. It is no longer a cold hardware assembly, but a companion gradually learning to "connect with humanity."

Summary

Returning to the fundamental question of what kind of assisted driving we really need, Huawei's QianKun Intelligent Driving 4.0 offers an important reference. The intelligent driving that users need is not a flashy label but peace of mind woven into daily life. It might be calm, precise maneuvering on a rural road, an "electronic eye" that sees through the rain on a stormy night, and above all decisive emergency braking in a life-or-death moment.

It should not be a fully autonomous driving system that completely replaces humans, but rather a friendly, collaborative human-machine co-driving system—a practical tool that truly enhances driving safety and reduces the driving burden, and a living entity capable of continuous learning and evolution. Huawei QianKun Intelligent Driving 4.0 seems to show us this possibility.

As Jin Yuzhi, CEO of Huawei's Intelligent Automotive Solution BU, said: "Our vision remains unchanged—to bring intelligence to every car. This is our mission, and our ultimate goal is to make travel safer and life better." This vision is gradually becoming a reality through technological achievements like Huawei's QianKun Intelligent Driving 4.0. As the technology matures and regulations improve, we can look forward to a zero-casualty, zero-anxiety intelligent travel experience in the near future.

